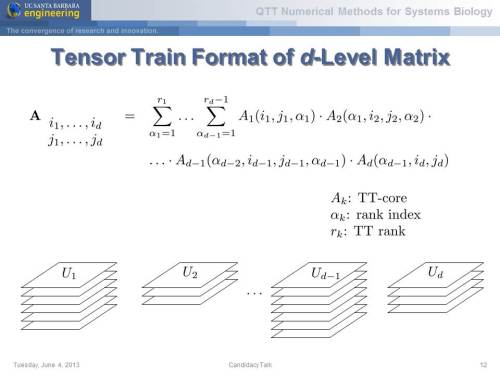

One of probable approaches to build graphical models with categorical variables is tensor decomposition:

http://bayesgroup.ru/wp-content/uploads/2014/05/icml2014_NROV-1.pdf

Notably both tensor decomposition (tensor train format) and method to use it for graphical models were developed at my faculty, though by different people at different chairs.

One more interesting question is interpretation of those hidden variables emerging in the middle.

At this moment I'm thinking over possibility to build this into GB-train, since trained model for each event provides sequence of binary visible variables. In principle, this may be written in quite simple way. Provided, that $x_i$ is boolean variable corresponding to $i$th cut in the tree (in the train, to be more precise).

For instance, one can write partition function as $$ Z = A_1[x_1, y] A_2[x_2, y] \dots A_n[x_n, y] $$ or as $$ Z = A_1[x_1] B_1[y] A_2[x_2] B_2[y] \dots A_n[x_n] B_n[y] $$

In both cases it's quite simple to estimate posterior probability since we have only limited set of options (targets) to be checked. But which one should be preferred and why?

No comments :

Post a Comment